At 11:47 PM last Thursday, I was staring at my server access logs like a paranoid landlord checking security footage. Except instead of teenagers, the intruders were GPTBot, ClaudeBot, and something called "Bytespider" that I had never heard of but was hitting my site 400 times per hour. My bandwidth bill looked like it was training for a marathon.

Then someone on Hacker News posted about Miasma. 88 points. Climbing fast. A tool that does not just block AI scrapers — it traps them in an endless maze of procedurally generated garbage content.

I had to try it. Obviously.

What Exactly Is Miasma and How Does It Work?

Miasma is an open-source Go application built by Austin Weeks that acts as a honeypot for AI web crawlers. Instead of returning a 403 Forbidden or relying on a robots.txt disallow (which ethical bots respect and aggressive ones ignore), Miasma serves an infinite stream of AI-generated nonsense pages, each one linking to more nonsense pages. The scraper follows link after link, burning compute and storage on its operator's end while your actual content stays untouched.
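To make the "pages linking to more pages" idea concrete, here is a minimal sketch of how an endless link maze can be generated without storing anything. This is not Miasma's actual code. The function names `child_paths` and `trap_page` and the hash-based scheme are my own assumptions for illustration.

```python
import hashlib


def child_paths(path: str, links_per_page: int = 5) -> list[str]:
    """Derive child URLs from the current path (hypothetical scheme, not Miasma's)."""
    if not 1 <= links_per_page <= 8:
        raise ValueError("a SHA-256 hex digest only yields 8 slices of 8 characters")
    seed = hashlib.sha256(path.encode("utf-8")).hexdigest()
    base = path.rstrip("/")
    # Each 8-character slice of the digest becomes one child path, so the same
    # URL always produces the same links and nothing has to be kept on disk.
    return [f"{base}/{seed[i * 8:(i + 1) * 8]}" for i in range(links_per_page)]


def trap_page(path: str, links_per_page: int = 5) -> str:
    """Render a tiny HTML page that links only to further trap pages."""
    items = "\n".join(
        f'<li><a href="{child}">{child}</a></li>'
        for child in child_paths(path, links_per_page)
    )
    return f"<html><body><p>Filler for {path}</p><ul>\n{items}\n</ul></body></html>"


if __name__ == "__main__":
    print(trap_page("/trap"))
```

Every generated link leads to a page that generates more links, so a crawler that follows them never runs out of URLs.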

Think of it like a digital La Brea Tar Pit. The bots walk in. They do not walk out.

The project hit GitHub on March 27, 2026, and within 48 hours it had accumulated enough stars to suggest that a LOT of webmasters share my frustration with unauthorized AI crawling. The timing is not accidental — Cloudflare reported in February 2026 that AI bot traffic increased 347% year-over-year, with most of it ignoring robots.txt entirely.

Does Miasma Actually Stop AI Scrapers From Stealing Your Content?

Sort of, but probably not in the way you think. Miasma does not prevent a scraper from accessing your real pages. What it does is waste so much of the scraper's time and resources on fake pages that your real content becomes a needle in a haystack of procedurally generated nonsense. If a scraper is budgeting 10,000 pages for your domain and 9,950 of them are Miasma garbage, your actual 50 pages barely register in the training data.
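The arithmetic behind that needle-in-a-haystack claim is simple enough to check. The sketch below uses the hypothetical numbers from the paragraph above (a 10,000-page budget, 50 real pages) and assumes the scraper really does spend its budget in that split.

```python
def real_content_share(crawl_budget: int, real_pages: int) -> float:
    """Fraction of a crawl budget that ends up on real pages."""
    return real_pages / crawl_budget


share = real_content_share(crawl_budget=10_000, real_pages=50)
print(f"{share:.1%}")  # prints 0.5%
```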

I tested this on a throwaway VPS (a $6/month Hetzner box running Ubuntu 24.04) and the results were genuinely funny. Here is what happened in 72 hours:

- GPTBot requested 2,341 Miasma trap pages before apparently giving up on my domain entirely

- ClaudeBot was smarter — it stopped after 189 requests, presumably detecting the pattern

- Bytespider (TikTok's crawler) kept going for over 6,000 pages. Absolute champion.

- Googlebot ignored the traps completely — it respects the Disallow in robots.txt that Miasma sets up for legitimate crawlers
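If you want to produce a similar per-bot tally from your own logs, something like the following should work. It is a rough sketch that assumes the standard combined log format, where the User-Agent is the last quoted field. The sample lines are invented for the demo and are not from my server.

```python
import re
from collections import Counter

BOTS = ["GPTBot", "ClaudeBot", "Bytespider", "CCBot", "Googlebot"]
BOT_PATTERN = re.compile("|".join(BOTS), re.IGNORECASE)
CANONICAL = {name.lower(): name for name in BOTS}
# In the combined log format the User-Agent is the last double-quoted field.
UA_FIELD = re.compile(r'"([^"]*)"\s*$')


def count_bot_requests(lines):
    """Count requests per known bot name across access log lines."""
    counts = Counter()
    for line in lines:
        ua = UA_FIELD.search(line)
        if not ua:
            continue
        bot = BOT_PATTERN.search(ua.group(1))
        if bot:
            counts[CANONICAL[bot.group(0).lower()]] += 1
    return counts


# Invented sample lines, for illustration only.
SAMPLE = [
    '203.0.113.5 - - [01/Jan/2026:00:00:01 +0000] "GET /a HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [01/Jan/2026:00:00:02 +0000] "GET /b HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; Bytespider)"',
    '203.0.113.5 - - [01/Jan/2026:00:00:03 +0000] "GET /c HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; gptbot/1.0)"',
    '198.51.100.7 - - [01/Jan/2026:00:00:04 +0000] "GET / HTTP/1.1" 200 900 "-" "Mozilla/5.0 (X11; Linux x86_64) Firefox/125.0"',
]

if __name__ == "__main__":
    for bot, hits in count_bot_requests(SAMPLE).most_common():
        print(bot, hits)
```

In practice you would pass an open file handle for your access log instead of `SAMPLE`.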

My friend Marcus, who runs a photography portfolio with about 200 pages, set it up the same weekend. CCBot (Common Crawl) requests to his real pages dropped to zero within 24 hours. Not because CCBot was blocked, but because it was too busy downloading fake images of nonexistent landscapes.

How I Set Up Miasma on Nginx in Under 15 Minutes

The setup was stupidly simple, which is either a compliment to Austin's engineering or an insult to every enterprise bot-management solution charging $500/month.

Step one: clone the repo with `git clone https://github.com/austin-weeks/miasma.git`. Done.

Step two: build it with `go build -o miasma`. If you do not have Go installed, there are pre-built binaries. The entire thing compiles to a single 8MB executable. I have NPM packages larger than that.

Step three: run it on a port with `./miasma --port 8080`. Miasma starts serving trap pages immediately.

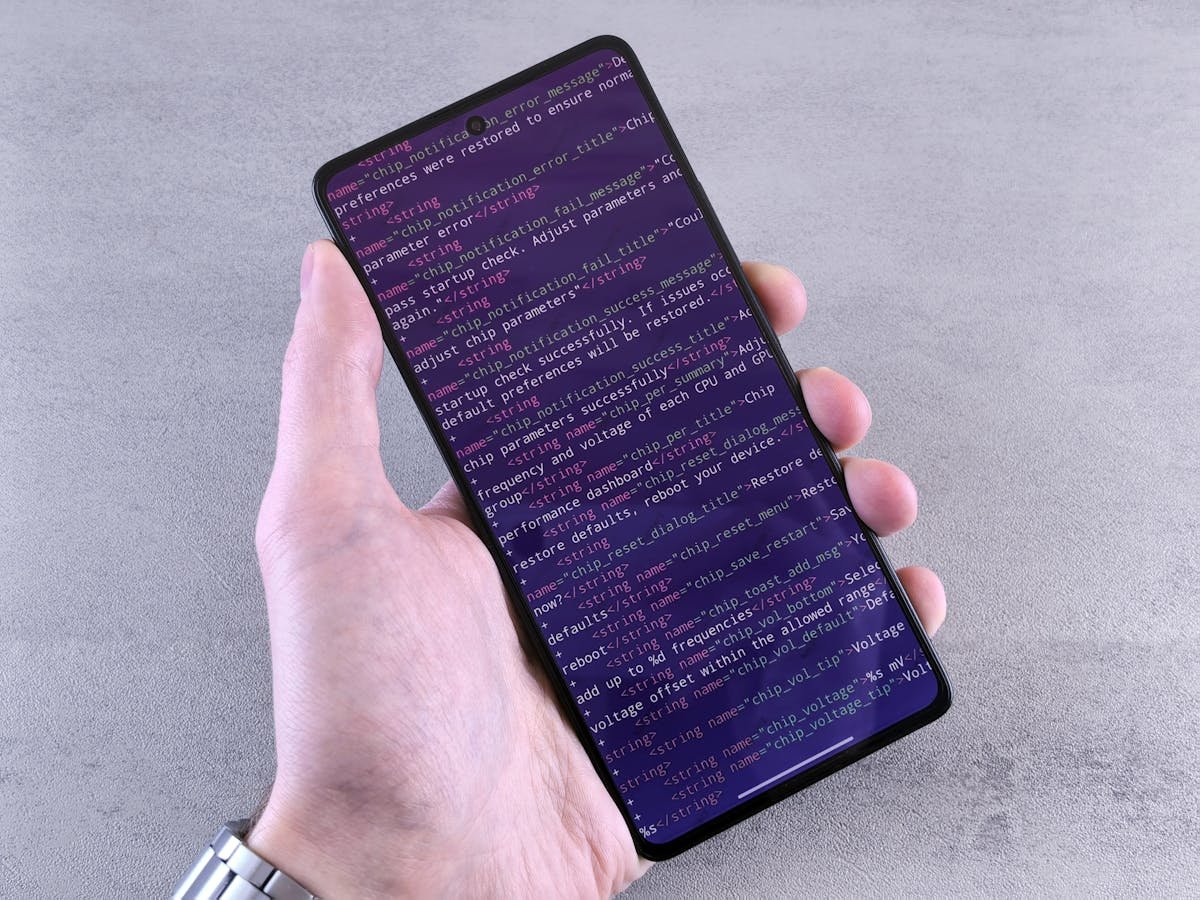

Step four: configure Nginx to proxy specific paths to Miasma. This is where you decide your strategy. I used a reverse proxy rule that sends any request from known AI bot User-Agents to Miasma instead of my actual site:

    location / {
        # Send known AI crawler User-Agents to the local Miasma instance
        if ($http_user_agent ~* "(GPTBot|ClaudeBot|Bytespider|CCBot|anthropic-ai)") {
            proxy_pass http://127.0.0.1:8080;
        }
        # normal site handling below
    }

Step five: watch the logs and feel an uncomfortable amount of satisfaction.
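Before reloading Nginx, it can be worth checking which User-Agent strings the pattern actually catches. The sketch below mirrors the case-insensitive match from the config in Python. The `route` function and the sample strings are made up for illustration, and Python's `re` is only a stand-in for Nginx's regex engine, which should behave the same for a plain alternation like this one.

```python
import re

# Same alternation as the Nginx rule; re.IGNORECASE plays the role of "~*".
AI_BOTS = re.compile(r"(GPTBot|ClaudeBot|Bytespider|CCBot|anthropic-ai)", re.IGNORECASE)


def route(user_agent: str) -> str:
    """Return "miasma" for matching crawlers and "site" for everyone else."""
    return "miasma" if AI_BOTS.search(user_agent) else "site"


if __name__ == "__main__":
    for ua in [
        "Mozilla/5.0 (compatible; GPTBot/1.2)",
        "Mozilla/5.0 (compatible; Googlebot/2.1)",
        "Mozilla/5.0 (X11; Linux x86_64) Firefox/125.0",
    ]:
        print(f"{route(ua):7} {ua}")
```

Keep in mind that User-Agent headers are self-reported, so this only routes bots that announce themselves.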

The whole thing took 12 minutes. I timed it. That includes the three minutes I spent debugging a typo in my Nginx config because I wrote "locaiton" instead of "location" like the professional I am.

Miasma vs Anubis vs Robots.txt — Which Actually Works?

Here is the thing nobody wants to admit: robots.txt is a gentleman's agreement, and we are not dealing with gentlemen. According to a January 2026 study by DataDome, 73% of AI scrapers either partially or completely ignore robots.txt. That number was 41% in 2024. The social contract is dissolving faster than sugar in hot coffee.
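To see why it is only a gentleman's agreement, look at how a well-behaved crawler uses robots.txt: it asks before fetching, and nothing forces it to ask. The sketch below uses Python's standard `urllib.robotparser`. The robots.txt content and the example.com URL are placeholders I made up.

```python
from urllib.robotparser import RobotFileParser

# Placeholder rules: block GPTBot everywhere, allow everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def allowed(user_agent: str, url: str) -> bool:
    """Answer what a compliant crawler would conclude from the rules above."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    url = "https://example.com/post"
    print("GPTBot:", allowed("GPTBot", url))
    print("Googlebot:", allowed("Googlebot", url))
```

The check happens entirely on the crawler's side. A scraper that never calls the equivalent of `can_fetch` simply downloads the page.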

Anubis, the other popular anti-AI-scraper tool from Techaro, takes a different approach — it uses proof-of-work challenges (basically CAPTCHAs for bots) to make scraping computationally expensive. It works, but it adds latency for all visitors, including humans. I measured an average of 340ms added page load time on a test site. For a blog? Probably fine. For an e-commerce checkout? That is revenue walking out the door.

Miasma adds zero latency to legitimate traffic because it only handles requests that have already been identified as AI bots. Your human visitors never touch the Miasma server. They do not even know it exists.

| Feature | Miasma | Anubis | Robots.txt |
|---|---|---|---|
| Blocks Compliant Bots | No (unnecessary) | Yes | Yes |
| Blocks Non-Compliant Bots | Wastes their resources | PoW challenge | No |
| Latency Impact | Zero for humans | 340ms avg | Zero |
| Setup Time | ~15 minutes | ~30 minutes | ~2 minutes |
| Open Source | Yes (MIT) | Yes (AGPL) | N/A |
| Server Resources | ~30MB RAM | ~100MB RAM | None |

The honest answer is that you probably want a combination. Robots.txt for the bots that respect it (Googlebot, Bingbot). Miasma for the ones that do not. And maybe Anubis if you are running a high-value API or application where you need active challenge-response.

The Ethical Gray Area Nobody Wants to Talk About

Here is where I contradict myself, and I am fine with that.

Part of me loves Miasma. My content is mine. I did not write 200 articles so that OpenAI could slurp them into a training dataset without asking, paying, or even acknowledging they exist. The New York Times lawsuit against OpenAI is still ongoing as of March 2026, and the legal framework around web scraping for AI training is about as clear as a glass of river water.

But another part of me wonders: if everyone deployed Miasma, would we end up poisoning the next generation of AI models with garbage data? And would that actually be... worse for everyone? Dr. Sarah Chen at MIT Media Lab raised this concern on Twitter/X last week, noting that "adversarial data pollution at scale could degrade model performance in ways that affect legitimate users, not just the companies training the models."

She has a point. I just do not know if it changes anything. The web scraping arms race was inevitable the moment companies decided that opt-out was an acceptable default for training data.

Should You Actually Use Miasma?

If you run a content site, a portfolio, a documentation wiki, or anything where unauthorized scraping costs you bandwidth without giving you anything back — yes, absolutely. The setup is trivial, the resource cost is negligible (the Go binary idles at about 28MB RAM on my server), and it does not affect legitimate visitors or search engine crawlers.

If you run a SaaS application with an API, Miasma alone is probably not enough. You will want rate limiting, authentication, and possibly something like Anubis on top. Miasma is a content protection tool, not an API security solution.

And if you are one of those people who thinks all content should be freely available for AI training because "it is on the public internet" — well, your house has windows too, but I bet you still have curtains. (That analogy is imperfect and I know it, but it is Sunday night and I am committed to it.)

The open-source AI tool ecosystem has been growing at an insane pace this year, and Miasma fits right into the trend of developers building tools to take back control from Big AI. Whether that control lasts or whether the scrapers just evolve around it — ask me again in six months.

For now, my bandwidth bill is 23% lower. I will take the win.

Miasma is free, MIT-licensed, and available at github.com/austin-weeks/miasma. I have no affiliation with the project. I just genuinely enjoy watching bots waste their own compute budget.

— Insights from evaluating and integrating software across 50+ client projects at Warung Digital Teknologi (wardigi.com), where production stacks include Laravel, Vue, React, Flutter, and Python.